Code

# Generating example data

set.seed(321) # Fix the random seed so the simulated data are reproducible

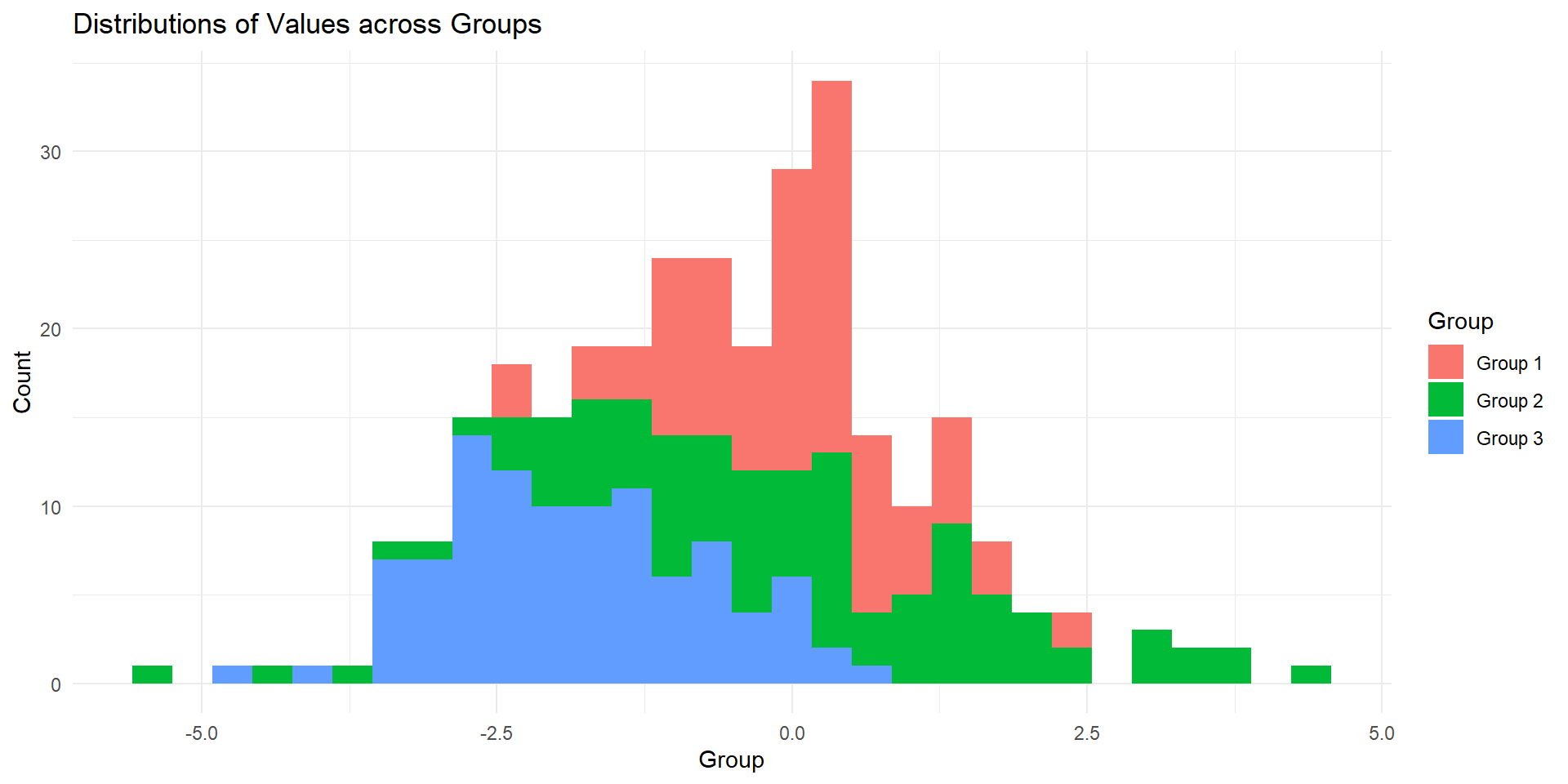

data1 <- rnorm(100, mean = 0, sd = 1) # Distribution 1: Mean 0, SD 1

data2 <- rnorm(100, mean = 0, sd = 2) # Distribution 2: Mean 0, SD 2

data3 <- rnorm(100, mean = -2, sd = 1) # Distribution 3: Mean -2, SD 1

# Creating a data frame for plotting

df <- data.frame(
  Group = rep(c("Group 1", "Group 2", "Group 3"), each = 100),
  Value = c(data1, data2, data3)
)